AI is powerful - human judgment makes it better.

When we decided to build AI detection software, we committed to using human-in-the-loop processes in AI TruthTeller®. That means a human sits somewhere in the middle of the process for every judgment rendered. Artificial intelligence is great, but human judgment combined with sophisticated AI tools is superior to an AI-only approach. In our world, humans remain responsible for judgment, risk and accountability. We want to help you make better decisions.

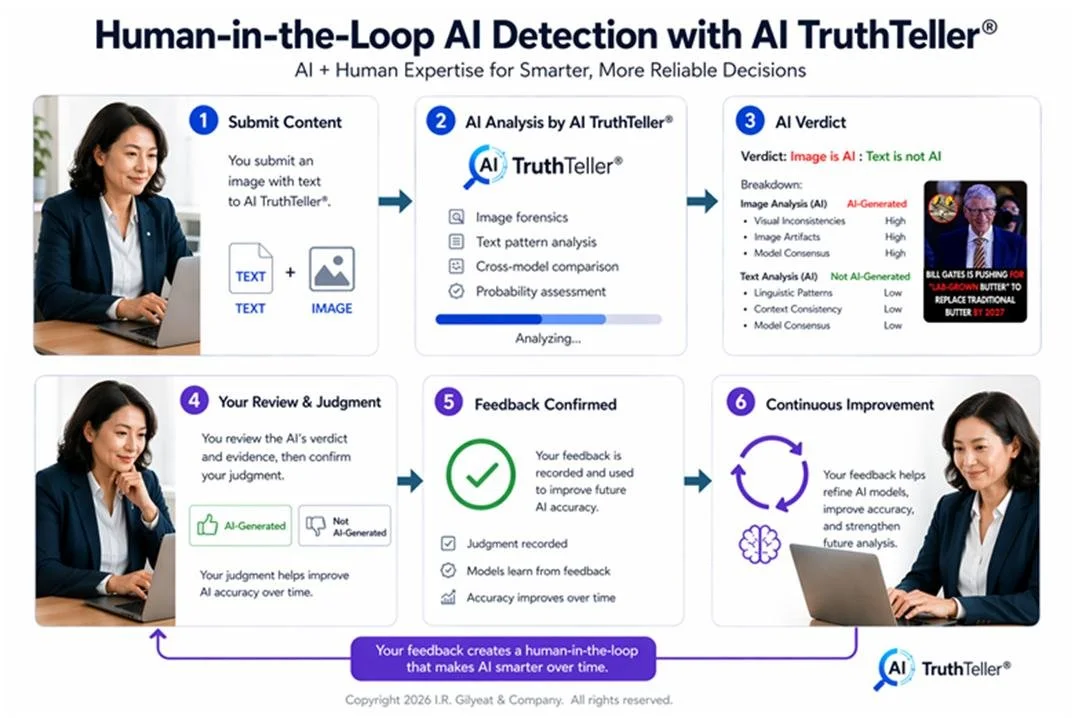

The image above shows how you, as a user of AI TruthTeller, are front and center in decision making and in providing immediate feedback to our AI detection platform. If the detection looks good to you, thumbs up! If the detection looks bad, thumbs down. Every time you give feedback, we use that judgment to refine the model and improve ongoing accuracy.

In addition to the first review by you, a human user, we insert a second human review in the middle of all data submissions. Images are encrypted, so we don’t see them, although human analysts do review the outcomes. All text is part of our human-in-the-loop process. Logs are reviewed, and suspected judgment errors are researched, validated and corrected if necessary. Models are refined, tuned and updated on a regular basis. Our data analysts meet frequently with our software engineers to identify, review and solve the problems that arise from the nuances of online human interactions. Short-form conversations and online social interactions create a unique set of problems for AI detection. They are difficult and nuanced at best.
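For readers who think in code, the two review stages described above can be sketched roughly as follows. This is a minimal illustration, not AI TruthTeller's actual implementation: the `Feedback` record, the function names and the 30% thumbs-down threshold are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    """One user's thumbs-up or thumbs-down on one detection (hypothetical record)."""
    detection_id: str
    user_id: str
    thumbs_up: bool


def summarize(feedback):
    """Tally thumbs-up and thumbs-down votes for each detection."""
    tallies = defaultdict(lambda: {"up": 0, "down": 0})
    for item in feedback:
        key = "up" if item.thumbs_up else "down"
        tallies[item.detection_id][key] += 1
    return dict(tallies)


def needs_analyst_review(tally, max_down_share=0.3):
    """Flag a detection when its share of thumbs-down votes exceeds the threshold.

    The 0.3 default is an arbitrary value chosen for illustration.
    """
    total = tally["up"] + tally["down"]
    return total > 0 and tally["down"] / total > max_down_share


def review_queue(feedback, max_down_share=0.3):
    """Return the detection ids a human analyst should look at, in sorted order."""
    tallies = summarize(feedback)
    return sorted(
        detection_id
        for detection_id, tally in tallies.items()
        if needs_analyst_review(tally, max_down_share)
    )


sample = [
    Feedback("d1", "alice", True),
    Feedback("d1", "bob", True),
    Feedback("d1", "carol", True),
    Feedback("d2", "alice", False),
    Feedback("d2", "bob", True),
    Feedback("d3", "carol", False),
]
queue = review_queue(sample)
```

In this sketch, the user's vote is the first review, and the queue of disputed detections is what a human analyst would pick up for the second review before any model update.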

Benefits of keeping humans in the loop

Let’s start with ambiguity. Humans are funny creatures. We speak many languages and use hand gestures, eye contact and visual images to communicate what we want and need. Translating all of that into clear and concise words is difficult. We might say one thing but mean something entirely different. Context is critical to understanding, and people know the nuances of context better than AI.

Responsibility. People are responsible for their own actions, or at least they should be. AI may have superior recall, deductive reasoning and logic, but people make covenants, sign contracts and have moral obligations. People have emotions and care about other people. AI does not. It is software, wrapped in silicon, without responsibility or obligation. At best, AI is man-made and can only do the bidding of its creators.

Accountability. It’s hard to hold AI accountable for its actions when it has no moral compass, no soul and no ability to act on its own. Humans are agents to act, whereas AI is an agent to be acted upon. Humans cannot abdicate accountability to a machine or a technology stack of software and hardware when it is humans who created AI in the first place. Keeping people in the loop when decisions are made helps us remember that we as individuals are accountable for the decisions we make, or allow to be made, by the technology we have organized and assembled.

Trust comes with time and experience. The more you use AI TruthTeller with positive outcomes, the more your trust increases. This is a natural outcome, but it’s important to know these positive outcomes are built on your shared experience, your good judgment and the judgment of others. The wisdom of the crowd teaches us that diversity, scale and independent thought yield a better result than one person with limited experience and even bias. AI TruthTeller gains enormous benefit by allowing every user to independently judge the output they individually receive. There is no collective thinking, only the aggregated judgments of every human in the decision-making loop.

When dealing in person-to-person human relationships, as we do in text messaging, email and social media, it’s essential to have live humans anchored in the decisions. It is a cold and heartless world when we abandon the realities of the human heart. Keeping humans in the loop protects this territory and improves the total AI experience.